Capption vs. Google Translate

Google Translate's model powers Capption's multilingual translations. But Google's app and Lens are a different story — here's what using them at an exhibit actually looks like.

At a glance

Assistive Technology

Capption

Capption uses Google's translation model to deliver multilingual exhibit content — then goes far beyond it. A single NFC tap delivers that content directly to a visitor's phone: no camera, no OCR, no augmented reality overlay. Text size, contrast, and font are in the visitor's control, and their existing accessibility settings carry over automatically.

General-Purpose Translation Tool

Google Translate + Lens

Google Translate converts text from one language to another. In an exhibit setting, visitors use the companion Google Lens feature to photograph or hover a camera over a label, rely on OCR to recognize the characters, and receive a translated overlay on a live camera feed. It's a multi-tool asked to do a very specific job in a very unforgiving environment.

Google Translate's complicated story, translated

Frustrated with the era's machine translation tools, Sergey Brin helped create Google Translate in 2004. It initially handled only English ↔ Russian, and was terrible at it. The years since brought statistical machine translation (2006), phrase-based translation that mapped sentence fragments instead of individual words, Chrome integration (2010), the acquisition of Word Lens for camera translation (2014), and Google Lens (2017).

The real leap came in 2016 when Google Neural Machine Translation debuted, translating entire sentences instead of words or phrases. In 2020 the team applied transformer-based machine learning — the same foundational technology behind modern AI — with self-attention mechanisms that weigh the relative importance of each word in context. Accuracy improved dramatically for core languages, and substantially for less-common ones.

Today Translate is easily the best it's ever been. Capption's own primary research routinely finds 80–100% accuracy depending on the language pair — plenty for excellent art, science, and nature exhibit translations. That's why we use the model in Capption. The experience of using Google's apps is a whole other matter.

How visitors actually use it

Some of Capption's very first user testers admitted to being "that person" who photographed every exhibit label in a museum — sometimes translating, sometimes keeping them "just in case." Visitor Experience staff spotted the behavior and assumed translation was an occasional use case, but they could never see what visitors actually did with those pictures.

Google doesn't prescribe how to use Translate. It's a multi-tool; the experience is your choice. In theory, scanning and translating an exhibit label looks straightforward. In practice, the real workflow unfolds like this:

- Encounter an exhibit label

- Skim it to determine what language it's in

- Decide whether it's worth pulling out your phone to know what it says

- Whip out your phone and unlock it

- Open Google Translate or Google Lens

- Select the Camera function (Google Lens) within Translate

- Frame the label text in the viewfinder

- Photograph for a static translation, or hold the camera perfectly steady for a live one

- Lens's OCR algorithm identifies characters and converts them to a translatable text string

- Translate translates the string

- Lens overlays translated text atop the original in augmented reality

- Read the translated text on the live camera feed

Visitors do get their translation eventually. But they tread an undignified path to get there.

Why it's so hard in a real museum

Eight compounding friction layers — any single one is manageable; together they become a barrier

Physically tethered to the label

Lens overlays translations onto a live camera feed, physically tethering the visitor to the exhibit label. They must stand directly in front of the placard for as long as it takes to read — blocking other visitors, feeling anxious about being in the way, and suffering physical fatigue from holding a camera steady while splitting attention between the text and the exhibit itself.

Shaky text, changing translations

Holding a phone in perfect orientation for a live translation forces fatigue and shaky hands. The AR text overlay jumps around on screen. Worse, as OCR re-scans the label frame by frame, the translations change — creating genuine doubt about what the label actually says.

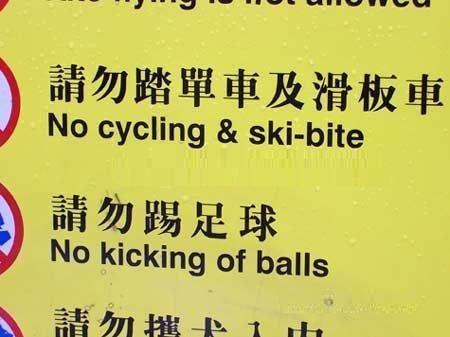

Museum environments break OCR

Dim lighting, spotlights creating glare on glossy placards, curved displays, and text printed on glass cases all degrade the camera's ability to recognize characters. Museums are notoriously hostile to OCR — which is the entire foundation Lens and Translate are built on.

Size constrained to the label

Lens overlays translated text within the visible physical dimensions of the printed label. Visitors cannot adjust line height, switch to a more legible font, or use a screen reader against a live camera feed. Whatever size and layout the label was printed in, that's what the translation inherits.

Not built for accessibility

Google Translate and Lens are general-purpose tools. They don't help blind visitors. They don't meaningfully help most low-vision visitors. They actively impair elderly and low-mobility visitors who struggle with camera precision and sustained phone-holding. Translation is there; accessibility is not.

Nothing to take home

Live translations have no permanence. After closing Lens, visitors are left with nothing referenceable: no history, no saved content, no way to revisit what they read at exhibit 7 while standing at exhibit 12. Unless a visitor downloaded the language pack ahead of time, Translate requires connectivity throughout; stepping out of range ends the experience.

No translation curation

Google Translate relies entirely on machine output. Exhibit content authors who want to refine a specific phrase in a specific language have no mechanism to do so — whatever the machine produces is what visitors receive.

Tolerance stacking

For the median visitor, any single problem won't critically impede their experience. Put a few together and stress rises noticeably. Translate and Lens create the user experience equivalent of tolerance stacking — compounding small imperfections into a genuinely frustrating whole.
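Simple arithmetic makes the compounding concrete. The sketch below assumes, purely for illustration, that each of eight friction layers goes smoothly 95% of the time and that the layers are independent. Both the 95% figure and the independence assumption are hypothetical, not measured values.

```python
import math


def chance_of_smooth_visit(per_layer_success: list[float]) -> float:
    """Probability that every layer goes smoothly, assuming independence."""
    return math.prod(per_layer_success)


# Hypothetical numbers: eight friction layers, each fine 95% of the time.
layers = [0.95] * 8
print(f"{chance_of_smooth_visit(layers):.2f}")  # prints 0.66
```

Under those assumptions, a visitor gets a fully smooth translation only about two times in three, even though no single layer looks alarming on its own.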

What Capption gives visitors that Google Translate and Lens can't

Translate and Lens fulfill a broad, general purpose: converting language X to language Y. Capption is an assistive technology meant to help exhibitors include low-vision, aging, and non-native-speaking visitors. Capption uses Google Translate's model for many translations, then builds an entirely different experience around it:

- Tap-to-acquire. Vastly improved over photography (which isn't accessible) and OCR (which isn't reliable). In testing, visitors access translated content via Capption 5–10× faster than via Lens, with greater accuracy.

- Size unconstrained. Translate and Lens operate in a 1-for-1 paradigm — content is constrained by the physical dimensions of the label. One tap on a 2″ × 2″ Capption sticker could deliver the New Testament.

- Genuine accessibility. Translate was never purpose-built for accessibility. In many cases, the wall label being translated wasn't readable in the first place — Lens won't help that. Capption visitors adjust font size, line height, and contrast, and native OS screen readers work against translated text.

- Palpable performance. Translate and Lens compute and recompute OCR, translation, and image overlay on every frame. In testing, most users finish reading their Capption content before completing Translate's acquisition workflow.

- Improved accuracy. Capption doesn't use OCR, eliminating visual misreads. Capption also declares the source language explicitly, so nothing downstream depends on guessing the language, which eliminates the risk of second- and third-degree translation errors.

- Translation curation. If a Capption administrator writes a hand-crafted Thai translation, Capption recognizes it and doesn't machine-translate over it.

- Language-by-language translator selection. Google Translate isn't the best machine translator for every language pair. Capption switches between translation services on a language-by-language basis.

- Content mobility. Once a visitor taps the tag, that content sits on their device. They can step back, sit on a bench, and read at their own pace in their own comfort zone — fully decoupled from the physical label.

- Supporting media. Any Capption can include images, videos, audio files, and external links. Exhibit labels can't.

- Visit records. Capption automatically generates an exhibit-centric history visitors can take home and reference later. Translate's live overlay disappears the moment you put your phone down.

Importantly, Capption does all of this without intermingling visitors' personal data with third-party surveillance systems, ad targeting, or privacy-eroding AI training.
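Three of the items above (translation curation, language-by-language translator selection, and an explicitly declared source language) fit together as a simple lookup order. The following is a minimal sketch of that idea, not Capption's actual implementation. Every name in it (`ExhibitContent`, `resolve_translation`, the stub translators) is hypothetical, and the translators are stand-in functions, not calls to any real translation service.

```python
from dataclasses import dataclass, field
from typing import Callable

# A translator takes (text, source_lang, target_lang) and returns text.
Translator = Callable[[str, str, str], str]


@dataclass
class ExhibitContent:
    source_lang: str   # declared by the author, never auto-detected
    source_text: str
    # Hand-written translations, keyed by language code.
    curated: dict[str, str] = field(default_factory=dict)


def resolve_translation(
    content: ExhibitContent,
    target_lang: str,
    translators: dict[str, Translator],
    default: Translator,
) -> str:
    """Pick the text to show a visitor who reads target_lang."""
    if target_lang == content.source_lang:
        return content.source_text
    # 1. A curated translation always wins over machine output.
    if target_lang in content.curated:
        return content.curated[target_lang]
    # 2. Otherwise use the translator chosen for this language, if any.
    translate = translators.get(target_lang, default)
    return translate(content.source_text, content.source_lang, target_lang)


# Stand-in translators for illustration only.
def stub_default(text: str, src: str, dst: str) -> str:
    return f"[default {src}->{dst}] {text}"


def stub_alternate(text: str, src: str, dst: str) -> str:
    return f"[alternate {src}->{dst}] {text}"


label = ExhibitContent(
    source_lang="en",
    source_text="Bronze mirror",
    curated={"th": "<hand-written Thai text>"},
)
per_language = {"ja": stub_alternate}

print(resolve_translation(label, "th", per_language, stub_default))
print(resolve_translation(label, "ja", per_language, stub_default))
print(resolve_translation(label, "fr", per_language, stub_default))
```

In this sketch the Thai request returns the curated text untouched, the Japanese request goes to the alternate translator, and the French request falls back to the default. The source language is passed through on every call, so no step has to infer it.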

Pricing

Google Lens and Translate are free. But free software comes at a different cost: ad tracking, heavy bandwidth use, user and content data harvesting — topped off by a clumsy experience with near-zero purpose-built accessibility.

Capption prices based on institution visitor volume with minimal implementation and upkeep effort. Talk to us about what that looks like for your institution.

How to decide

Google Translate and Lens are not accessibility tools. They handle translation slowly, for a general audience in a general context. Capption was purpose-built to help exhibiting institutions include and serve new audiences. That mission happens to include much faster, more readable translation, because Capption sidesteps the OCR, rendering constraints, size limitations, and accessibility gaps of Translate and Lens.

The practical guidance

If your institution merely requires translation and doesn't care about usability or accessibility, put up signs about Lens and Translate and call it a day. If you're building or improving a top-notch visitor experience, Capption delivers state-of-the-art accessibility while provably reducing the digital friction visitors loathe.

See Capption in action

Capption combines translation, accessibility, rich media, and visit take-aways into a single tap. Try it yourself.